DORA Resilience Checklist for Testing (2026 Guide)

Digital Operational Resilience Act (DORA) testing is often misunderstood as a single annual exercise. In practice, supervisors typically expect a repeatable testing program that is risk-based, evidenced, and governed, with clear links to your critical or important functions, ICT assets, and ICT third-party service providers (ICT TPPs). This DORA resilience checklist is designed for compliance officers, CISOs, and ICT risk managers who need a defensible way to plan, execute, and evidence testing across the year. If you need a foundation on scope and terminology first, start with “what is digital resilience,” then use this checklist to pressure-test your current approach against DORA’s operational expectations.

What this checklist covers (and what it does not)

This checklist focuses on how to operationalize DORA-aligned digital operational resilience testing in a way that stands up to audit and supervisory review. It is structured around the practical problem most financial entities face: testing outputs are often scattered across ticketing tools, penetration test reports, vendor emails, BCM evidence folders, and spreadsheets, while DORA expects coherence across governance, risk, third-party oversight, and continuous improvement.

It does not replace your internal control framework, your detailed security testing methodology, or any threat-led penetration testing (TLPT) program design decisions. TLPT has specific constraints and expectations under DORA and related RTS; this checklist helps you ensure the surrounding governance and evidence chain is complete.

For deeper context on the types of testing and expected program structure, see the related guides on digital operational resilience testing and DORA digital resilience testing. If your stakeholders still need clarity on the regulation itself, align on definitions using the guides on the Digital Operational Resilience Act and on what the Digital Operational Resilience Act is.

1) Set the foundations for DORA testing

A DORA testing program is usually easiest to defend when it is anchored to governance decisions and a documented risk-based rationale. Supervisors may look for clarity on “why these tests,” “why this depth,” and “how you ensured coverage of what matters.”

Checklist: governance and policy prerequisites

Practical considerations

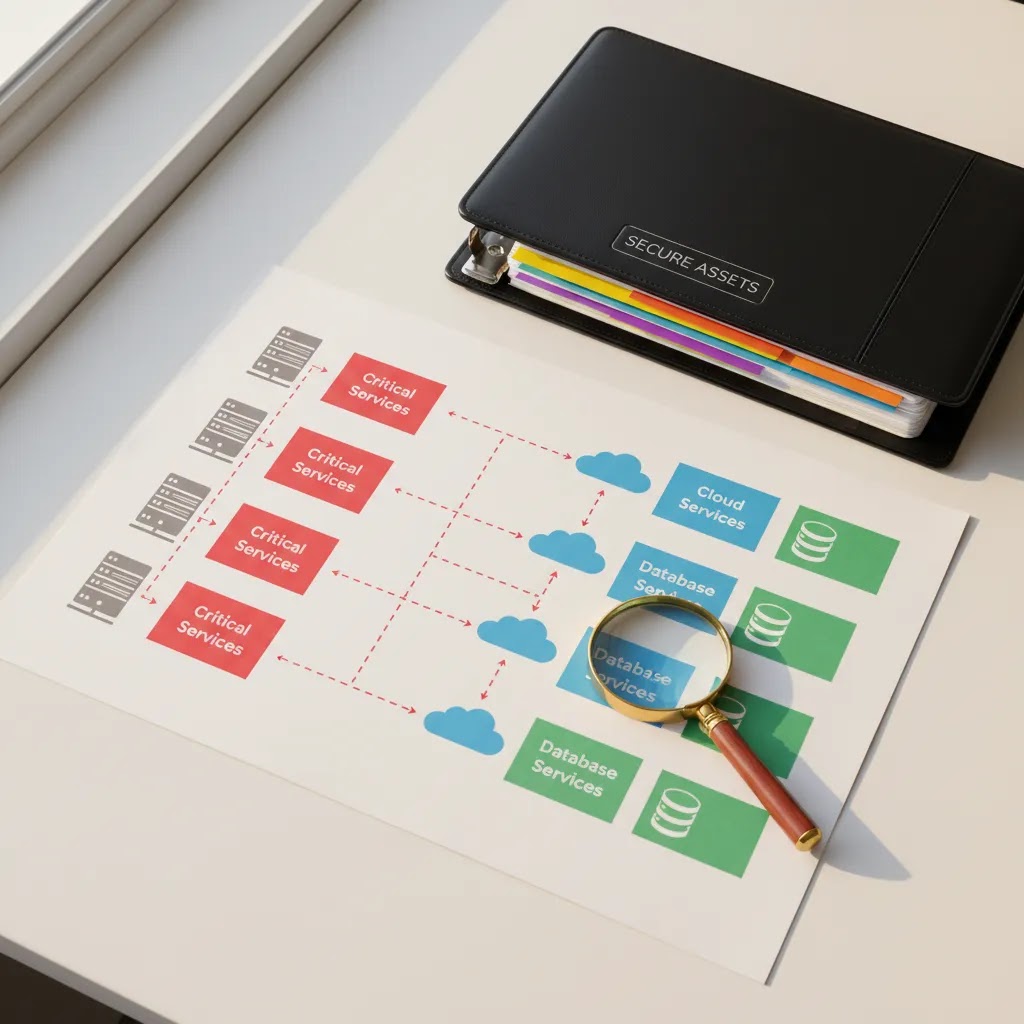

2) Build the scope and inventory you will test against

Most testing programs fail defensibility because the scope is implicit. DORA pushes financial entities toward demonstrable understanding of dependencies, including ICT TPP reliance, and how that reliance affects operational resilience.

Checklist: minimum scoping artifacts to link testing to

Practical considerations

3) Create a risk-based testing plan (not a calendar ritual)

A credible testing plan is typically a portfolio: different test types, different frequencies, and different depth based on the operational importance of the service and the risk profile. Supervisors may expect you to show that you prioritized testing where impact is highest, and that you did not rely on a single control activity to “cover everything.”
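One way to make the risk-based rationale explicit is to derive each service's test depth and frequency from a documented policy table. The sketch below is illustrative only: the service names, tiers, intervals, and test types are assumptions for the example, not values prescribed by DORA or any RTS.

```python
from dataclasses import dataclass

# Hypothetical policy table:
# (supports critical/important function, risk rating) -> (max months between tests, test types).
# Every value here is an illustrative assumption; real values come from your approved policy.
POLICY = {
    (True, "high"): (6, ["penetration test", "recovery test", "incident response exercise"]),
    (True, "low"): (12, ["vulnerability scan", "recovery test"]),
    (False, "high"): (12, ["vulnerability scan", "configuration review"]),
    (False, "low"): (24, ["vulnerability scan"]),
}


@dataclass(frozen=True)
class Service:
    name: str
    supports_critical_function: bool
    risk_rating: str  # "high" or "low" in this simplified sketch


def plan_for(service: Service) -> dict:
    """Return the planned interval and test types, recording the rationale used."""
    months, tests = POLICY[(service.supports_critical_function, service.risk_rating)]
    return {
        "service": service.name,
        "max_interval_months": months,
        "test_types": list(tests),
        "rationale": (
            f"critical_function={service.supports_critical_function}, "
            f"risk={service.risk_rating}"
        ),
    }


payments = plan_for(Service("payments-gateway", True, "high"))
intranet = plan_for(Service("staff-intranet", False, "low"))
print(payments)
print(intranet)
```

Keeping the rationale next to the plan entry means the answer to “why this depth” is stored with the plan rather than reconstructed later.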

Checklist: build the annual testing portfolio

Practical considerations

4) Execute tests with governance, evidence, and traceability

Execution quality is not only about the test itself. For DORA defensibility, you typically need evidence that tests were authorized, that scope was controlled, that results were reviewed, and that actions were tracked to closure.
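The four evidence points above (authorization, controlled scope, reviewed results, actions tracked to closure) can be checked mechanically before a test is reported as complete. This is a minimal sketch under stated assumptions: the `ExecutionRecord` fields and the sample identifiers are hypothetical and do not reflect any particular tool's schema.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ExecutionRecord:
    """Hypothetical record of one test execution; field names are illustrative."""
    test_id: str
    authorized_by: Optional[str] = None
    approved_scope: List[str] = field(default_factory=list)
    tested_targets: List[str] = field(default_factory=list)
    reviewed_by: Optional[str] = None
    open_action_ids: List[str] = field(default_factory=list)


def evidence_gaps(record: ExecutionRecord) -> List[str]:
    """Return a description of each missing link in the evidence chain."""
    gaps = []
    if not record.authorized_by:
        gaps.append("no recorded authorization")
    # Anything tested that was never approved indicates uncontrolled scope.
    out_of_scope = sorted(set(record.tested_targets) - set(record.approved_scope))
    if out_of_scope:
        gaps.append("targets outside approved scope: " + ", ".join(out_of_scope))
    if not record.reviewed_by:
        gaps.append("results not reviewed")
    if record.open_action_ids:
        gaps.append("actions not closed: " + ", ".join(record.open_action_ids))
    return gaps


record = ExecutionRecord(
    test_id="PT-2025-03",
    authorized_by="CISO",
    approved_scope=["payments-api"],
    tested_targets=["payments-api", "reporting-db"],
    reviewed_by=None,
    open_action_ids=["ACT-17"],
)
gaps = evidence_gaps(record)
for gap in gaps:
    print(gap)
```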

Checklist: execution controls that improve audit readiness

Practical considerations

5) Manage findings, remediation, and retesting

DORA-oriented supervision tends to focus on continuous improvement. That makes remediation discipline and retesting evidence particularly important for demonstrating “resilience outcomes,” not only activity completion.
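Remediation discipline is easier to evidence when the findings workflow itself refuses closure without a retest. The following sketch models that as a small state machine. The status names, the allowed transitions, and the `retest_evidence` parameter are assumptions chosen for illustration, not a prescribed lifecycle.

```python
from enum import Enum


class Status(Enum):
    OPEN = "open"
    REMEDIATING = "remediating"
    RETEST_PENDING = "retest_pending"
    CLOSED = "closed"


# Illustrative transition rules: a failed retest sends the finding back to remediation.
ALLOWED = {
    Status.OPEN: {Status.REMEDIATING},
    Status.REMEDIATING: {Status.RETEST_PENDING},
    Status.RETEST_PENDING: {Status.CLOSED, Status.REMEDIATING},
    Status.CLOSED: set(),
}


class Finding:
    def __init__(self, finding_id: str, severity: str):
        self.finding_id = finding_id
        self.severity = severity
        self.status = Status.OPEN
        self.history = [Status.OPEN]  # ordered trail of every status held
        self.retest_evidence = None

    def move_to(self, new_status: Status, retest_evidence: str = None) -> None:
        if new_status not in ALLOWED[self.status]:
            raise ValueError(f"{self.status.value} -> {new_status.value} is not allowed")
        if new_status is Status.CLOSED:
            if not retest_evidence:
                raise ValueError("closure requires retest evidence")
            self.retest_evidence = retest_evidence
        self.status = new_status
        self.history.append(new_status)


finding = Finding("F-001", "high")
finding.move_to(Status.REMEDIATING)
finding.move_to(Status.RETEST_PENDING)
try:
    finding.move_to(Status.CLOSED)  # no retest evidence supplied
except ValueError as err:
    print("blocked:", err)
finding.move_to(Status.CLOSED, retest_evidence="retest-report-F-001")
print(finding.status.value)
```

The `history` list doubles as a simple audit trail of the path each finding took to closure.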

Checklist: findings lifecycle and closure criteria

Practical considerations

6) Address ICT third-party and supply-chain testing expectations

DORA places explicit emphasis on ICT third-party risk management. While DORA does not require you to “penetration test your provider” in every case, you will typically need a defensible assurance approach, proportionate to the service criticality and provider dependency chain.

Checklist: third-party testing and assurance

Practical considerations

7) Prepare management and supervisory-ready outputs

Management bodies and competent authorities rarely want raw test artifacts. They typically want evidence that the testing program covers critical services, produces actionable results, and drives measurable remediation within agreed timelines.
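“Remediation within agreed timelines” can be reported as a small set of counts rather than a list of raw findings. The sketch below assumes hypothetical remediation timelines per severity and sample findings; none of the numbers are regulatory requirements.

```python
from datetime import date

# Hypothetical agreed remediation timelines in days, by severity.
SLA_DAYS = {"high": 30, "medium": 90}

# Sample findings, invented for illustration.
FINDINGS = [
    {"id": "F-1", "severity": "high", "raised": date(2025, 3, 1), "closed": date(2025, 3, 20)},
    {"id": "F-2", "severity": "high", "raised": date(2025, 3, 1), "closed": date(2025, 5, 15)},
    {"id": "F-3", "severity": "medium", "raised": date(2025, 4, 1), "closed": None},
]


def remediation_summary(findings, as_of):
    """Count findings by closure state and whether they are within the agreed timeline."""
    summary = {"closed_on_time": 0, "closed_late": 0, "open_within_sla": 0, "open_overdue": 0}
    for f in findings:
        # Open findings are aged up to the reporting date.
        end = f["closed"] or as_of
        on_time = (end - f["raised"]).days <= SLA_DAYS[f["severity"]]
        if f["closed"]:
            key = "closed_on_time" if on_time else "closed_late"
        else:
            key = "open_within_sla" if on_time else "open_overdue"
        summary[key] += 1
    return summary


summary = remediation_summary(FINDINGS, as_of=date(2025, 6, 1))
print(summary)
```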

Checklist: reporting outputs that tend to be requested

If stakeholders still debate baseline resilience concepts, align terminology using the “what is digital resilience” guide to reduce misinterpretation between “security testing” and “operational resilience testing.”

DORA testing minimums, proportionality, and TLPT triggers (what supervisors may look for)

DORA testing expectations are not only about running more tests. The regulation requires that your digital operational resilience testing program is risk-based, proportionate, and capable of demonstrating that ICT systems and controls can support continuity of critical or important functions under stress. These expectations sit under Chapter IV of Regulation (EU) 2022/2554, and they are further shaped by Regulatory Technical Standards and Implementing Technical Standards developed by the European Supervisory Authorities (EBA, ESMA, and EIOPA) through the Joint Committee.

DORA has applied since 17 January 2025. In supervisory reviews, the question is often whether your approach can be justified end-to-end: your scoping rationale, test selection, evidence standards, and how testing outcomes drive remediation and risk decisions.

Checklist: “minimum defensibility” signals supervisors may expect

How TLPT typically fits into a DORA resilience checklist

Threat-led penetration testing (TLPT) is a distinct requirement for certain financial entities, typically those designated as significant under the DORA framework and subject to competent authority expectations. TLPT is not “just a pen test”: it is intelligence-led, scenario-driven, and designed to test detection and response capabilities against realistic threat actors, often with strict controls on scope, execution, and oversight.

Whether TLPT applies to you, and the exact execution requirements, could depend on the applicable RTS and supervisory interpretation. Even when TLPT is not mandatory for your institution, supervisors may still question whether your testing portfolio is sufficiently challenging for your highest-risk service chains.

Regulatory note

This content is for informational purposes only and does not constitute legal advice. Your TLPT applicability, testing frequency, and governance expectations may vary based on your entity type, risk profile, and competent authority guidance. Consult qualified legal or regulatory counsel for institution-specific guidance under Regulation (EU) 2022/2554 and applicable RTS/ITS.

Evidence mapping: how to tie tests to DORA Articles, RTS/ITS expectations, and your audit trail

What many compliance teams overlook is that “testing evidence” is not a single artifact. Under DORA, evidence is the traceability chain that lets you demonstrate, under challenge, that tests were planned and executed as part of a controlled program, and that outcomes were acted on. In most institutions, audit issues arise because evidence exists, but it is not mapped to DORA obligations, not linked to critical services, or not presented in a reviewable structure.

From a practical standpoint, your evidence model should be designed so you can answer three supervisory questions quickly: what did you test, why was that sufficient for the risk, and what changed as a result.
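A simple way to check whether the evidence model can answer those three questions is to store one mapped entry per test and flag the fields that are still empty. The structure below is a sketch: the field names, test IDs, and sample values are hypothetical, and the obligation reference is a free-text label that you would align with your own reading of the regulation.

```python
# Hypothetical evidence map: one entry per test, keyed by test ID.
EVIDENCE_MAP = {
    "RT-2025-01": {
        "what": "backup restore test of core-banking database",
        "critical_function": "payment processing",
        "obligation_ref": "DORA Chapter IV testing program",
        "why_sufficient": "restore time measured against documented recovery objective",
        "what_changed": ["restore runbook updated", "finding F-014 remediated and retested"],
    },
    "VS-2025-07": {
        "what": "vulnerability scan of customer portal",
        "critical_function": None,
        "obligation_ref": None,
        "why_sufficient": "",
        "what_changed": [],
    },
}

REQUIRED = ("what", "critical_function", "obligation_ref", "why_sufficient", "what_changed")


def unmapped_fields(entry: dict) -> list:
    """List the fields that are empty, i.e. questions the entry cannot yet answer.

    Note: in this simplified sketch an empty "what_changed" is flagged even when a
    test legitimately produced no findings; a real model would record that explicitly.
    """
    return [name for name in REQUIRED if not entry.get(name)]


for test_id, entry in EVIDENCE_MAP.items():
    missing = unmapped_fields(entry)
    print(test_id, "complete" if not missing else f"missing: {missing}")
```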

Checklist: a practical evidence map to maintain

How this helps in a supervisory review

Regulatory note

This content is for informational purposes only and does not constitute legal advice. Evidence expectations can vary by competent authority, entity type, and supervisory focus, and may be influenced by RTS/ITS specifications and ESA guidance. Consult qualified legal or regulatory counsel for institution-specific advice.

Where testing connects to major ICT-related incident reporting under DORA

DORA treats resilience testing and ICT-related incident management as connected capabilities. Under Chapter III of DORA, financial entities must have processes to manage, classify, and report major ICT-related incidents, and may voluntarily notify significant cyber threats. Testing is one of the most credible ways to prove that your detection, response, and recovery capabilities are not only documented, but exercised and improved.

If your incident reporting playbooks assume certain telemetry, escalation paths, or recovery steps, your testing program is where those assumptions should be challenged. If you cannot evidence that critical incident workflows have been tested against realistic scenarios, you can end up with a gap between “policy compliance” and “operational readiness,” which supervisors may probe after an incident.

Checklist: align testing outcomes with incident reporting readiness

Practical considerations

Regulatory note

This content is for informational purposes only and does not constitute legal advice. Major incident classification, reporting timelines, and content requirements may depend on the final ITS and competent authority processes applicable to your institution. Consult qualified legal or regulatory counsel for institution-specific guidance under Regulation (EU) 2022/2554 and ESA standards.

How Dorapp supports evidence-driven DORA programs

Dorapp is a DORA-focused compliance platform designed to help financial entities run controlled, auditable workflows rather than relying on email and spreadsheets. Based on currently available platform documentation, Dorapp is modular and includes DORApp ROI (Register of Information) and DORApp TPRM (Third-Party Risk Management and Questionnaire Automation). Additional modules are on the roadmap, including DORApp IM (Incident Management and Reporting), DORApp RMG (ICT Risk Management and Governance), and DORApp IIS (Information and Intelligence Sharing). Dorapp also describes an Execution Governance Engine with configurable review gates and sign-off, plus an Audit Trail for workflow and record changes.

For testing programs, the practical value is typically in traceability: linking what you tested, what you found, who approved decisions, and how third-party dependencies affected scope and remediation. If you are building a program that must be repeatable and supervisor-ready, it can be worth evaluating whether a DORA-dedicated workflow layer reduces evidence gaps and rework versus spreadsheet-based coordination.

To explore Dorapp directly, you can review the DORApp Modules, see DORApp Functions, or book a demo to walk through how Dorapp structures DORA evidence and approvals in practice.

Strengths and Challenges

Strengths

Implementation Considerations

Frequently Asked Questions

What does DORA require for digital operational resilience testing?

DORA expects financial entities to run a risk-based testing program that validates ICT resilience and security controls across critical or important functions and supporting ICT assets. In most cases, this means multiple test types across the year, not a single annual exercise. Specific supervisory expectations can depend on your entity type, size, and risk profile, and relevant RTS may shape how certain testing activities are evidenced and governed.

How is TLPT different from standard penetration testing under DORA?

Threat-led penetration testing (TLPT) is typically more scenario-driven and intelligence-led than standard penetration testing, and it is commonly treated as a higher-assurance activity. Whether TLPT applies to your financial entity depends on the supervisory designation and applicable RTS. Even if TLPT does not apply, you still need a coherent testing portfolio, evidence of governance, and closure discipline for findings.

What evidence should we retain from resilience tests?

Evidence usually needs to cover authorization, scope, execution artifacts, findings classification, review and approvals, remediation actions, and retesting results. The goal is to show traceability from test intent to outcome and improvement. Evidence retention and detail should be proportionate, but for critical services you typically need enough granularity to support audit challenge and demonstrate management oversight decisions.

How do we link testing to critical or important functions?

Most institutions start by mapping critical or important functions to the ICT services, applications, and infrastructure that enable them, then linking tests to those service chains. The testing plan can then show coverage by function, including gaps and exceptions with rationale. Where third-party services support the chain, your assurance approach should be integrated into the same coverage view rather than stored separately.
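The coverage view described above can be sketched as a join between the function-to-service map, the tests recorded per service, and any documented exceptions. All names and mappings below are invented for illustration, and the three statuses (“tested,” “exception,” “gap”) are an assumed convention.

```python
# Illustrative mappings; in practice these would come from your service inventory.
FUNCTION_TO_SERVICES = {
    "payment processing": ["payments-api", "core-banking-db", "cloud-hosting (ICT TPP)"],
    "customer onboarding": ["onboarding-portal"],
}
TESTS_BY_SERVICE = {
    "payments-api": ["PT-2025-03"],
    "core-banking-db": ["RT-2025-01"],
}
# Documented exceptions with rationale, including third-party assurance decisions.
EXCEPTIONS = {
    "cloud-hosting (ICT TPP)": "direct testing not feasible; relying on provider assurance report",
}


def coverage_view() -> dict:
    """Return, per function, a (service, status, detail) row for every supporting service."""
    view = {}
    for function, services in FUNCTION_TO_SERVICES.items():
        rows = []
        for service in services:
            tests = TESTS_BY_SERVICE.get(service)
            if tests:
                rows.append((service, "tested", ", ".join(tests)))
            elif service in EXCEPTIONS:
                rows.append((service, "exception", EXCEPTIONS[service]))
            else:
                rows.append((service, "gap", "no test or documented rationale"))
        view[function] = rows
    return view


view = coverage_view()
for function, rows in view.items():
    print(function)
    for service, status, detail in rows:
        print(f"  {service}: {status} ({detail})")
```

Listing the third-party service in the same view as internal services keeps provider assurance visible alongside direct test coverage.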

What are common weaknesses supervisors may identify in testing programs?

Common issues include unclear scope, weak linkage to critical services, lack of approval trail, findings that do not convert into tracked remediation, and inconsistent retesting before closure. Another recurring issue is fragmented third-party assurance that is not integrated into the institution’s risk decisions. Supervisory focus may evolve as the European Supervisory Authorities (EBA, ESMA, and EIOPA) publish further guidance.

How should third-party testing be handled under DORA?

You typically need a proportionate assurance approach for ICT third-party service providers, especially where they support critical or important functions. That may include contractual assurance, control reports, resilience evidence, and structured follow-up on weaknesses. The feasibility of direct testing can vary, particularly with large cloud or SaaS providers, so institutions often combine multiple assurance mechanisms and document compensating controls.

How often should we test under a “DORA resilience checklist” approach?

Frequency is usually risk-based. Critical services and high-risk technology stacks often warrant more frequent testing and more rigorous retesting of high-severity findings. Lower-risk services may follow lighter cycles. What matters is that you can justify the frequency, show that it is reviewed, and demonstrate that testing results drive remediation and governance decisions rather than being “check-the-box” outputs.

How can a platform help with DORA testing compliance if tests are executed in other tools?

Even when tests are executed in specialized tools, financial entities still need governance, evidence management, sign-off, exception handling, and reporting that ties results back to services and providers. A DORA-oriented platform can help centralize those workflows and create consistent audit trails. It should be evaluated on its ability to enforce review gates, maintain traceability, and support management reporting without overstating automation.

Is Dorapp a replacement for our existing GRC or security testing stack?

Not necessarily. Based on available documentation, Dorapp is positioned as a DORA-focused platform with modules for Register of Information and third-party risk management, and with an execution governance approach for controlled workflows and evidence. Many financial entities will still keep their existing GRC, security testing, and ticketing systems. The practical question is whether Dorapp can reduce DORA-specific fragmentation and evidence gaps.

What are the “5 pillars” of DORA, and where does testing sit?

DORA is commonly explained through five areas: ICT risk management, ICT-related incident management and reporting, digital operational resilience testing, ICT third-party risk management, and information and intelligence sharing arrangements. Testing sits alongside governance, incident management, and third-party oversight, and supervisors often assess whether these areas reinforce each other rather than operating as separate workstreams.

Is vulnerability scanning alone enough to satisfy DORA testing expectations?

Typically not. Vulnerability scanning is useful for identifying known weaknesses, but DORA’s testing expectations are broader and risk-based. Most institutions need a portfolio that includes at least some combination of technical testing (for example: penetration testing or configuration review) and operational resilience exercises (for example: backup restore and recovery testing, incident response exercises), proportionate to the criticality of the service.

How do we decide whether we are “significant” for TLPT purposes under DORA?

Whether TLPT applies can depend on the criteria and designation processes applied by competent authorities and the relevant RTS. In practice, you typically need to engage your compliance, risk, and regulatory affairs functions to determine your status, confirm supervisory expectations, and document your rationale. This content is for informational purposes only and does not constitute legal advice. Consult qualified legal or regulatory counsel for institution-specific guidance.

Does DORA require annual testing of critical systems?

DORA establishes a programmatic testing expectation under Chapter IV rather than a single uniform frequency for every system. Supervisors commonly expect regular testing for critical services, and many institutions operate at least annual cycles for key control validations, with more frequent testing for higher-risk areas. Your defensible position is the documented rationale for frequency and depth, plus evidence that it is reviewed and updated.

Key Takeaways

Conclusion

A practical digital operational resilience checklist is less about enumerating test types and more about proving governance: what you tested, why you tested it, what you found, who approved decisions, and how you improved resilience outcomes across services and ICT third parties. If your current evidence is split across tools and teams, that fragmentation can become the real compliance risk during audit or supervisory review.

If you want to assess whether a DORA-focused workflow layer could help your institution structure testing evidence and approvals more consistently, review the DORApp Functions and DORApp Modules, or book a demo to discuss your testing governance and documentation approach with the Dorapp team.

Disclaimer: This article is intended for informational purposes only and does not constitute legal advice. DORA compliance obligations vary depending on the classification and size of your financial institution. Consult qualified legal or regulatory counsel to assess your specific obligations under the Digital Operational Resilience Act and applicable regulatory technical standards.

About the Author

Matevž Rostaher is Co-Founder and Product Owner of DORApp. He brings deep experience in building secure and compliant ICT solutions for the financial sector and is positioned by DORApp as an expert trusted by financial institutions on complex regulatory and operational challenges. DORApp’s own webinar materials list him as CEO and Co-Founder of Skupina Novum d.o.o. and CEO and Co-Founder of FJA OdaTeam d.o.o.